Python Strings Equal

String equality is one of those topics that looks junior-level until you’ve debugged the consequences in a real service.

Most advice about python strings equal stops at syntax. That advice is fine for toy scripts and weak for production systems.

A backend doesn’t fail because a developer forgot that "a" == "a" returns True. It fails because login input carries a trailing space, because two visually identical Unicode strings aren’t the same byte sequence, or because a secret token gets compared with the wrong primitive.

Senior engineers treat equality as a design decision. They ask what “equal” should mean in a given context, where normalization belongs, whether comparison is security-sensitive, and how data should be stored so the rest of the codebase stays predictable.

Why String Equality is a Senior Developer Concern #

If a user signs up with an email address and later types a slightly different variant of it, such as one with different capitalization or a stray trailing space, the bug isn't in the == operator. The bug is in the system design around it. The comparison is only as reliable as the rules you establish before the comparison happens.

That’s why string equality belongs in the same mental category as input validation, schema design, and data modeling. A clean equality strategy prevents duplicate accounts, inconsistent search behavior, fragile API contracts, and hours of debugging that should never have been necessary. The same thinking shows up when you design fast lookups with a hash map, because consistency in representation determines whether two values land in the same logical bucket at all. The broader principle is the same one discussed in this hash map Python guide.

Equality reflects your system contract #

A junior implementation compares whatever raw input arrived most recently. A stronger implementation decides what the canonical representation is, stores that representation, and keeps comparisons boring.

That sounds simple. It is simple. But simplicity at the architectural level takes discipline.

Practical rule: If your equality logic is scattered across controllers, serializers, background jobs, and templates, you don’t have equality logic. You have inconsistency.

In production, every string comparison answers a business question:

- Authentication input: Is this the same credential material the system expects?

- User identity fields: Should case differences matter?

- Search and matching: Should accented and decomposed characters count as equivalent?

- Protocol values: Is exact byte-for-byte sameness required?

Those are different questions. They shouldn’t all share the same comparison policy.

Robust systems define equality upstream #

The strongest pattern is to define equality semantics at the boundary. Normalize at ingestion, store canonical values where appropriate, and make later comparisons straightforward.

That decision reduces branching everywhere else. It also makes tests clearer. Instead of writing endless edge-case assertions across the stack, you put the complexity close to the inputs and preserve clean invariants after that.

The == operator is just the visible tip. The actual engineering work is deciding what kind of sameness your system can defend consistently.

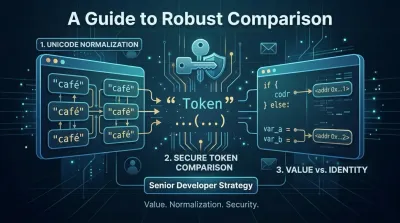

The First Decision: Value vs Identity #

When developers search for python strings equal, the first distinction they need to internalize is this: value equality and object identity are not the same thing.

`==` asks whether two strings have the same content. `is` asks whether both names point to the exact same object in memory. For application logic, that difference is foundational. One gives you a stable semantic rule. The other exposes an implementation detail.

Python has relied on == for string value comparison since the language began. Python 3.0, released in December 2008, made str Unicode-only and replaced Python 2's split between a byte-oriented str and a separate unicode type. DigitalOcean's discussion of Python string comparison reports that this split caused 28% of migration bugs related to equality in GitHub issue stats from 2009 to 2020.

Why `is` sometimes appears to work #

Understanding string behavior can be tricky for beginners. Python may intern some strings, especially small or repeated literals. This means two separate-looking variables can sometimes reference the same underlying object.

So you might see code like this behave “correctly” in a quick experiment:

- two equal literals compared with `is`

- a cached string appearing identical by identity

- a REPL session encouraging false confidence

Then the same idea breaks after a refactor, after reading input from a request, or after constructing strings dynamically. The bug isn’t random. The code was relying on memory behavior instead of program meaning.
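A minimal sketch of that failure mode, assuming CPython. The variable names are illustrative, and the identity result is an implementation detail that can differ between interpreters and versions, which is exactly the problem:

```python
# Two strings with the same content, created in different ways.
literal = "hello world"
built = " ".join(["hello", "world"])  # constructed at runtime, like request input

# Value equality: the stable, semantic check.
print(literal == built)  # True

# Identity: depends on how the interpreter happened to allocate the objects.
# In CPython this is typically False here, but neither answer is guaranteed.
print(literal is built)
```

The first check answers a question about text. The second answers a question about memory layout that your business logic never asked.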

The architecture lesson #

Code that depends on identity for business logic is brittle by design. It ties correctness to interpreter behavior, optimization strategy, and how a value happened to be created.

That’s not engineering discipline. That’s luck.

A developer who understands memory models knows that identity checks have a place. They’re useful for sentinels like None, singleton objects, or framework-specific markers. They are not the right tool for asking whether two pieces of text mean the same thing.
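Here is a small sketch of where identity checks do belong. The `_MISSING` sentinel and the `resolve_nickname` function are hypothetical names invented for illustration, not part of any library:

```python
from typing import Optional

_MISSING = object()  # hypothetical module-level sentinel


def resolve_nickname(nickname=_MISSING, default: str = "anonymous") -> Optional[str]:
    """Distinguish 'argument not supplied' from an explicit None."""
    if nickname is _MISSING:  # identity: is this the sentinel object itself?
        return default
    if nickname is None:      # identity: None is a singleton
        return None
    # Text is compared by value, never by identity.
    return default if nickname == "" else nickname
```

Identity is used only for the two objects that are unique by construction. The one comparison that involves text uses ==.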

If you need a refresher on how Python strings behave as data types before you reason about comparison semantics, this Python strings for beginners article covers the foundations well.

The rule you can hand to a junior developer #

Use this decision table:

| Question | Operator | Why |
|---|---|---|
| Do these two strings contain the same text? | == | Compares value |
| Are these two references the same exact object? | is | Compares identity |
| Am I checking for None? | is | None is a singleton |
| Am I writing app logic for user input, API fields, or database values? | == | Logic should depend on meaning, not memory |

That rule scales. It also teaches something deeper than syntax. It teaches that production code should be correct because its semantics are explicit.


Rely on `is` for strings and you’re outsourcing correctness to an optimization you don’t control.

That’s the opposite of predictable backend design.

Normalization: The Unsung Hero of Data Consistency #

Most string comparison bugs aren’t really comparison bugs. They’re normalization failures.

One system stores "Alice", another receives " alice ", and a third compares against "ALICE". None of those values are equal as raw strings, but that raw mismatch often isn’t what the business intended. If your application accepts human input, canonicalization has to happen before comparison becomes trustworthy.

Python’s == operator runs in O(n) time for string comparison, and guidance summarized by DigitalOcean's article on Python string equals notes that production applications require input canonicalization before comparison. The same summary states that pre-normalized bulk comparisons execute 3 to 5x faster than raw comparisons on malformed inputs at scale above 100k comparisons, with the recommendation to normalize once at ingestion and then use direct ==.

Normalize once, compare many times #

That pattern matters more than most tutorials admit. If you normalize ad hoc every time you compare, your codebase turns into a minefield of slightly different assumptions.

One request handler strips whitespace. Another lowercases. A background task casefolds but forgets whitespace. A reporting job compares untouched raw values. You now have four equality definitions inside one product.

A stronger pattern is:

- Define canonical form per field

- Apply it at the edge

- Store the canonical representation when the domain allows it

- Use plain == everywhere else

That design does two things well. It reduces cognitive load for developers, and it reduces accidental mismatch across services.
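As a rough sketch, the pattern can be as small as one shared function. The name `canonical_username` and its policy (trim, then casefold) are assumptions chosen for illustration; your field may need a different rule:

```python
def canonical_username(raw: str) -> str:
    """Hypothetical canonical form for a username: trimmed and case-insensitive."""
    return raw.strip().casefold()


# Normalize once, at the edge (for example, when a request is parsed)...
stored = canonical_username("Alice")
incoming = canonical_username("  ALICE ")

# ...so every later comparison is a plain ==.
print(stored == incoming)  # True
```

The value of the function is less in what it does than in the fact that there is only one of it.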

What normalization usually includes #

For many backend fields, the first pass is straightforward:

- Whitespace cleanup: Use .strip() when leading and trailing spaces have no meaning.

- Case strategy: Use .lower() only when the domain is narrow and you control the character set.

- Global text handling: Prefer .casefold() when the field may contain real-world language data.

The point isn’t to memorize methods. The point is to decide which transformations preserve business meaning.

Architecture rule: Don’t let every comparison choose its own cleanup policy. Equality rules belong in one reusable normalization path.

.lower() versus .casefold() #

A lot of Python code reaches for .lower() by reflex. That works for plenty of English-only situations, but it’s not the strongest default if your product might ever serve users outside a narrow language context.

.casefold() is the more defensive choice for text matching because it’s designed for caseless comparison across a wider range of Unicode characters. If you’re building account lookup, user-generated content search, or cross-region APIs, .casefold() usually signals the better mindset. You’re designing for the data you have now and the data your system may receive later.

That doesn’t mean every field should become case-insensitive. It means the rule must be explicit.
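A short illustration of the difference, using the German "ß" as the example character:

```python
a = "Straße"
b = "STRASSE"

print(a.lower() == b.lower())        # False: "straße" vs "strasse"
print(a.casefold() == b.casefold())  # True: casefold maps "ß" to "ss"
```

Whether those two spellings should match is still a domain decision. The example only shows that .lower() and .casefold() give different answers once the text leaves plain ASCII.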

A practical field-by-field view #

| Field type | Good default | Common mistake |

|---|---|---|

| Username for login | Strip, then apply chosen case policy | Comparing raw request input |

| Email for lookup | Strip surrounding whitespace, then follow your app’s canonicalization policy | Letting formatting differences create duplicates |

| API enum values | Exact canonical set | Accepting loose variants in one endpoint but not another |

| Free-form content | Preserve original text, normalize only for matching/search paths | Destroying original user input unnecessarily |

A mature system usually stores both where needed: the original presentation value and the normalized comparison value. That gives you clean UX without sacrificing consistent matching.
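One way to sketch that dual storage is a small value object. The `DisplayName` class, its field names, and the trim-plus-casefold policy are all hypothetical, meant only to show the shape of the idea:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DisplayName:
    original: str   # what the user typed, kept for display and audit
    match_key: str  # derived value used only for comparison and lookup

    @classmethod
    def from_raw(cls, raw: str) -> "DisplayName":
        # Assumed policy: trim whitespace, then casefold for matching.
        return cls(original=raw, match_key=raw.strip().casefold())


first = DisplayName.from_raw("Alice ")
second = DisplayName.from_raw("ALICE")

print(first.match_key == second.match_key)  # True
print(repr(first.original))                 # 'Alice ' is preserved as typed
```

Because the match key is derived, it can be recomputed later if the policy changes, without touching what the user entered.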

Where developers often put this logic wrong #

The worst place is deep inside business logic. By then, the raw value has already leaked into logs, caches, events, or persistence.

Better placements include:

- Serializer or request validation layer

- Model factory or domain constructor

- Dedicated normalization utilities shared across services

- Ingestion pipeline steps for imported data

That placement turns normalization into infrastructure instead of cleanup.

It also improves learning. When you study backend engineering, this is one of the habits that separates syntax familiarity from actual software design skill. You stop asking, “How do I compare two strings?” and start asking, “Where should the system define sameness so every downstream component stays simple?”

Building Global-Ready Systems with Unicode Normalization #

Many systems pass local tests and still fail globally because developers confuse visual sameness with semantic sameness in Unicode.

Two strings can look identical on screen and still fail ==. A classic example is café written as a single composed character for é versus the same visible text built from e plus a combining accent. Those strings can compare unequal even after .casefold().

Guidance summarized by freeCodeCamp's article on comparing Python strings notes that this café versus decomposed café case can still return False on == even after .casefold(), affecting 15% of multilingual user data per the cited Unicode reports. The same summary warns that ignoring this risks bugs in sorting, matching, or LLM inputs, and mentions a contrarian approach of using unicodedata plus hashing instead of .lower() for global scale.
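The café case is easy to reproduce with the standard library. This sketch uses NFC as the normalization form, which is one reasonable choice rather than the only one:

```python
import unicodedata

composed = "caf\u00e9"     # "é" as one code point (U+00E9)
decomposed = "cafe\u0301"  # "e" followed by a combining acute accent (U+0301)

print(composed == decomposed)                        # False
print(composed.casefold() == decomposed.casefold())  # False

nfc_a = unicodedata.normalize("NFC", composed)
nfc_b = unicodedata.normalize("NFC", decomposed)
print(nfc_a == nfc_b)                                # True
```

Case handling and Unicode normalization solve different problems, so a field that needs both has to apply both.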

Unicode normalization is not optional for global products #

If your app handles names, addresses, product catalogs, support tickets, AI prompts, or imported CSV data, it already has an internationalization problem whether you planned for one or not.

The mistake is assuming “we only serve English right now” protects you. It doesn’t. Data crosses borders faster than product strategy documents do. Copy-paste from documents, phones, forms, and external platforms introduces Unicode variation long before a team thinks of itself as international.

Pick a normalization policy deliberately #

Python gives you Unicode normalization forms through unicodedata.normalize(). The key design question isn’t “which one is best in general?” It’s “which one preserves the meaning my domain cares about?”

To approach this practically:

- NFC: Good when you want a standard composed form for storage and comparison.

- NFD: Useful when decomposition matters for specialized processing.

- NFKC / NFKD: More aggressive compatibility normalization, useful when visually or compatibility-equivalent forms should collapse together, but only if that loss of distinction is acceptable in your domain.

This is architecture, not trivia. A search index, a login identifier, and a legal document field may need different choices.
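To make the trade-off concrete, here is a small comparison of NFC and NFKC on a ligature character. The sample string is an illustrative choice:

```python
import unicodedata

ligature = "\ufb01le"  # "file" written with the single-character "fi" ligature (U+FB01)

print(unicodedata.normalize("NFC", ligature) == "file")   # False: NFC keeps the ligature
print(unicodedata.normalize("NFKC", ligature) == "file")  # True: NFKC expands it to "fi"
```

For a search index, collapsing the ligature is probably what you want. For a field where the exact characters carry meaning, that same collapse is data loss.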

Systems become global before the team feels global. Unicode bugs are often the first proof.

Store raw text and normalized text separately when needed #

This is one of the cleanest patterns for multilingual systems.

Keep:

- Original value for display, audit, and user trust

- Normalized comparison value for deduplication, matching, and indexing

That approach avoids a false choice between preserving user input and keeping your matching logic sane. It also makes migrations safer because you can rebuild derived normalized values without losing the original text.

For high-scale pipelines, some teams also derive comparison keys or hashes from normalized text. That can simplify matching strategies when repeated comparison becomes expensive or when multiple services need the same equality semantics. The important part is consistency. If the normalization policy changes, the derived key strategy must change with it.
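A derived key might look something like the sketch below. The function name, the order of steps (NFC, trim, casefold), and the choice of SHA-256 are assumptions for illustration, not a recommendation for every domain:

```python
import hashlib
import unicodedata


def comparison_key(text: str) -> str:
    """Hypothetical key: NFC-normalize, trim, casefold, then hash the UTF-8 bytes."""
    canonical = unicodedata.normalize("NFC", text).strip().casefold()
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


print(comparison_key("Caf\u00e9 ") == comparison_key("cafe\u0301"))  # True
```

Every service that computes this key has to use the same steps in the same order, which is why the policy belongs in shared code and not in each caller.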

Defensive Coding: Secure String Comparison #

Not every string comparison is just about correctness. Some are about attack surface.

The regular == operator is appropriate for normal business logic, and Python’s implementation short-circuits on mismatch. That’s part of why direct comparison is efficient for ordinary use. It’s also why you should pause before using it on secrets.

When a comparison stops as soon as it finds a difference, execution time can vary based on how much of the input matched. In security-sensitive contexts, that timing behavior can leak information. An attacker doesn’t need your whole secret at once if your application helps them learn it piece by piece.

Where == is the wrong tool #

Use caution any time the value being compared is sensitive:

- API keys

- Session tokens

- Webhook signatures

- Password reset tokens

- Cryptographic digests

For these cases, use hmac.compare_digest() instead of plain ==. The goal is constant-time style comparison behavior that doesn’t reveal partial-match timing clues.
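In its simplest form, that looks like the following. The `tokens_match` wrapper is a hypothetical helper; hmac.compare_digest() is the standard library call doing the work:

```python
import hmac


def tokens_match(expected: str, provided: str) -> bool:
    """Compare two secret tokens without short-circuiting on the first mismatch."""
    # Encoding to bytes avoids the ASCII-only restriction that
    # compare_digest places on str arguments.
    return hmac.compare_digest(expected.encode("utf-8"), provided.encode("utf-8"))
```

Wrapping the call in one named function also gives code review a single place to look for secret comparisons.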

This is defensive programming in practice. You assume a motivated attacker will observe more than your happy-path tests do.

Security is context, not syntax #

A lot of developers learn “use == for strings” and then apply it everywhere. That’s understandable, but incomplete.

The mature rule is narrower and better: use == for normal value equality after proper normalization, and use a secure comparison primitive for secrets. Same language, different risk model.

That distinction mirrors a broader backend lesson. No tool is universally correct outside context. The same discipline that helps you avoid empty-string bugs in request handling also helps you separate harmless equality checks from dangerous ones. Input validation habits discussed in this guide to the Python empty string often intersect with comparison decisions in auth and validation code.

Defensive comparison pattern #

A good secure path usually looks like this:

- Validate shape first: Reject clearly invalid inputs early, such as missing values or structurally impossible tokens.

- Normalize only when the protocol permits it: Human identifiers often need canonicalization. Cryptographic material usually does not. Don’t “helpfully” lowercase secrets.

- Compare with the right primitive: Use hmac.compare_digest() for sensitive equality checks.

- Keep secrets out of ad hoc utility code: If token comparison is spread across handlers and helpers, somebody will eventually use == in the wrong place.
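The steps above can be sketched for one common case, a webhook signed with HMAC-SHA256. The function name, parameters, and the assumption that the signature arrives as a hex string are all hypothetical; real providers differ in header names and encoding:

```python
import hashlib
import hmac


def verify_webhook(secret: bytes, body: bytes, signature_hex: str) -> bool:
    """Hypothetical check for a hex-encoded HMAC-SHA256 signature."""
    # 1. Validate shape first: a SHA-256 hex digest is exactly 64 hex characters.
    if len(signature_hex) != 64:
        return False
    try:
        provided = bytes.fromhex(signature_hex)
    except ValueError:
        return False
    # 2. No normalization of the secret or the body: they are protocol bytes.
    expected = hmac.new(secret, body, hashlib.sha256).digest()
    # 3. Compare with the right primitive.
    return hmac.compare_digest(expected, provided)
```

Keeping this in one function covers the fourth point as well: handlers call `verify_webhook` and never touch the comparison themselves.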

Security takeaway: Correctness is not enough for secret comparisons. The comparison method itself must resist information leakage.

One mental model that holds up #

Ask one question before every string comparison: What damage happens if this comparison is subtly wrong?

If the answer is “a user sees a duplicate label,” the fix is probably normalization and cleaner data boundaries. If the answer is “an attacker gains information about a secret,” the fix is stronger than normalization. It requires a different comparison primitive and a more defensive design mindset.

That habit is what turns string handling from a coding detail into security engineering.

From Syntax to Strategy: A Developer's Mindset on Equality #

String equality is one of the best small topics for learning how senior engineers think. The syntax is easy. The judgment is where the skill lives.

A weak approach asks, “Which operator compares strings in Python?” A better approach asks, “What should equality mean for this field, this workflow, and this threat model?” Those are backend questions. They involve data contracts, user behavior, storage policy, security boundaries, and internationalization.

The mindset worth building #

When you encounter any new comparison problem, work through it in this order:

| Decision | What to ask |

|---|---|

| Value or identity | Am I comparing meaning or object reference? |

| Raw or canonical | Should these inputs be normalized before they ever reach business logic? |

| Local or global text | Can Unicode variation change the answer? |

| Ordinary or sensitive | Is == safe here, or does this require secure comparison? |

That sequence prevents a lot of bad code. It also makes code review sharper. Instead of arguing over style, teams can ask whether the comparison policy matches the domain.

What strong developers learn from this #

They learn to keep invariants close to the boundary. They learn that predictable systems come from canonical data, not repeated cleanup. They learn that exact equality, user-friendly equality, and secure equality are different categories.

They stop treating implementation details as architecture. == is a tool. The system around it determines whether your application is reliable.

The jump from junior to senior often looks like this. You stop solving the line of code and start designing the conditions that make the line of code reliable.

That’s why python strings equal is a useful topic far beyond beginner syntax. It trains the habit that matters in real engineering. Define meaning clearly, encode it once, and let the rest of the system stay simple.
