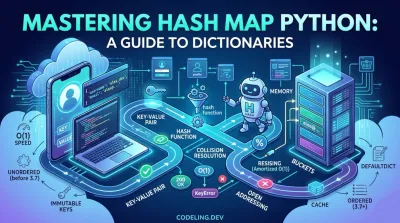

Mastering Hash Map Python A Guide to Dictionaries

You’ve probably been using hash maps for ages without even realizing it. Let's dig in.

You’ve probably been using hash maps for ages without even realizing it. Anytime you've used a Python dictionary (dict), you were using a hash map. It's one of the most powerful tools in your arsenal for one simple reason: it's absurdly fast for looking up, adding, and removing data. Getting this right is fundamental to writing code that doesn't crawl.

What Is a Python Hash Map and Why Does It Matter #

Imagine a massive, disorganized pile of books. If I asked you to find one specific book, you'd have to look through them one by one. That’s like a Python list. Now, imagine a magical filing cabinet. You give it a label (a "key"), and it instantly gives you back the folder (the "value") you stored. That’s a hash map.

This is exactly how Python's dict works. It doesn't line things up in order like a list. Instead, it uses your key to compute a specific "address"—a slot in memory—and jumps directly there to find the value. This direct-access trick is why dictionaries feel almost instant, no matter how much data you cram into them.

The Power of Key-Value Pairs #

The core building block of any hash map is the key-value pair. It’s a beautifully simple concept: you link a unique identifier (the key) to some piece of data (the value). This makes your code incredibly intuitive and readable.

A dictionary is the perfect way to store something like a user's profile.

user_profile = {

"username": "alex_dev",

"email": "[email protected]",

"member_since": 2021

}Instantly grab the email using its key

print(user_profile["email"])

Output: [email protected]

This is way better than trying to remember that the email is at index 1 in a list. The key "email" tells you exactly what you're getting.

A hash map's biggest win is its speed. Searching a list for one item means you might have to check every single element. A dictionary just goes straight to the right spot, giving you near-constant speed whether it holds 10 items or 10 million.

This isn't just a theoretical detail; it has huge implications for backend engineers. When you're building applications that need to handle thousands of requests a second, this kind of speed is non-negotiable for things like:

- Caching data: Storing results from slow database queries to serve them up instantly.

- Managing user sessions: Tying a session ID (the key) to a user's information (the value).

- Handling API data: JSON naturally maps directly to key-value structures, making dictionaries a perfect fit.

For anyone serious about building software, understanding how Python's hash map (dict) works under the hood is non-negotiable. It's often the difference between an application that flies and one that grinds to a halt.

To really see why dictionaries are so often the right choice, it helps to compare them directly against lists for common tasks.

Python Data Structures At a Glance #

The table below breaks down the performance you can typically expect from dictionaries (hash maps) versus lists. It's a great cheat sheet for deciding which one to reach for.

| Operation | Dictionary (Hash Map) | List | Winner for Speed |

|---|---|---|---|

| Get Item | O(1) - Very fast and constant time |

O(n) - Slow, depends on list size |

Dictionary |

| Set/Add Item | O(1) - Very fast and constant time |

O(1) - Fast (if appending to end) |

Tie |

| Delete Item | O(1) - Very fast and constant time |

O(n) - Slow, depends on list size |

Dictionary |

| Check for Item | O(1) - Very fast (checking for a key) |

O(n) - Slow (checking for a value) |

Dictionary |

As you can see, if your goal is fast lookup, modification, or deletion based on a unique identifier, the dictionary wins every time. Lists are still great for ordered sequences of items, but for anything resembling a lookup table, a hash map is your best friend.

Python dictionaries feel like magic. They're incredibly fast, letting you look up values by a key almost instantly. But it’s not magic—it's just clever engineering built on a fundamental computer science concept: the hash map.

If you've ever wondered what's happening under the hood when you write my_dict['key'], you've come to the right place. Understanding how hash maps work is key to appreciating why Python's dictionaries are such a powerhouse.

How a Key Becomes an Array Index #

At its core, a Python dictionary is a combination of two things: a hash function and a dynamic array.

When you add a key-value pair, Python doesn't store the key directly as an index. Instead, it runs the key through its built-in hash() function. This function takes your key—whether it's a string, number, or tuple—and crunches it down into a massive integer called a hash value. The goal is for every unique key to produce a unique hash.

This hash value is then used to figure out where in the underlying array the key-value pair should live. But since the array isn't billions of slots long, Python uses a simple trick: the modulo operator (%). It takes the giant hash value and finds the remainder when divided by the current size of the array. The result is a valid index.

Let's walk through a quick example. Imagine we want to store a player's score: scores['player_one'] = 100.

-

Hashing the Key: Python takes the key

'player_one'and computes its hash. Let's sayhash('player_one')returns a huge number like48651239871. -

Calculating the Index: The dictionary can't have 48 billion slots. If its internal array currently has 8 slots (or "buckets"), it calculates the index like this:

48651239871 % 8, which equals 7. -

Storing the Data: The key-value pair

('player_one', 100)is placed at index7of the array.

This whole process lets Python jump almost directly to the right spot. It doesn't need to scan the collection from the beginning. That's the secret sauce behind the near-instant lookups and insertions, giving dictionaries their famous O(1) average-case performance.

The key isn't the index. It's transformed by the hash function to find the index. This is the central idea that makes hash maps so fast.

What Happens When Keys Collide? #

So, what happens if another key, like 'player_two', also ends up with an index of 7? This is called a hash collision, and it's a completely normal and expected part of how hash maps work. You can't avoid them entirely.

Older versions of Python handled this by creating a list at that index and just appending the new item. This method, called chaining, worked, but it could get slow if a lot of items ended up in the same bucket.

Modern Python dictionaries (since version 3.3) use a much smarter technique called open addressing. If the target bucket is already taken, Python doesn't just pile things up. Instead, it methodically probes for the next empty slot in the array and puts the new item there.

This wasn't just a small tweak; it was a game-changer. The switch to open addressing in Python 3.3 resulted in a 40% performance boost for common dictionary operations. It also cut memory usage by up to 30% by keeping everything neatly packed in a single array structure.

Keeping It Fast by Resizing #

Of course, if you keep adding items, the dictionary will start to fill up, and collisions will happen more often. If the dictionary gets too crowded, all that probing for empty slots will slow things down.

To prevent this, the dictionary keeps an eye on its load factor—basically, how full the underlying array is.

Once the load factor hits a certain point (typically around 2/3 full), the dictionary triggers a resize.

- A new, bigger array is allocated, usually double the original size.

- Every single key-value pair from the old array is re-hashed and inserted into the new, more spacious array.

This resizing step is expensive, but the good news is that it doesn't happen often. The cost is spread out over many fast insertions, giving the dictionary what's known as "amortized" constant time performance. It's this intelligent balancing act between collision handling and resizing that makes Python dictionaries so reliable and fast for almost any task.

Alright, let's talk about how to really understand hash maps. Reading the theory is one thing, but there's no substitute for getting your hands dirty and building one from the ground up.

This is the best way to grasp what's happening under the hood. We're going to strip away all the fancy C-level optimizations of Python's built-in dict and focus on the raw mechanics: hashing keys, storing values, and—most importantly—what to do when two keys want the same spot.

Let's build a simple HashMap class from scratch. For our underlying structure, we'll just use a plain old Python list to act as our array of "buckets."

Initializing the Hash Map Structure #

First things first, we need a skeleton for our map. The __init__ method will set a starting size and create our list of buckets. To deal with collisions right from the start, we'll make each bucket an empty list.

class SimpleHashMap:

def __init__(self, size=16):

self.size = size

self.buckets = [[] for _ in range(self.size)]And just like that, we have a hash map with 16 empty buckets. Each bucket is a list, ready to hold the key-value pairs that get assigned to it. This technique of using a list at each index is called chaining, and it's a classic way to handle collisions.

Now for the magic. We need a way to turn any given key into a bucket index. We'll create a private _hash method that uses Python's built-in hash() function. We then use the modulo operator (%) to make sure the result is a valid index that fits within our array size.

Inside the SimpleHashMap class #

def _hash(self, key):

return hash(key) % self.sizeThis tiny function is the heart and soul of our hash map. It takes a key and reliably maps it to an index somewhere between 0 and 15.

Setting and Getting Key-Value Pairs #

With our structure and hash function ready, we can implement the main event: adding and retrieving data.

The set method is responsible for inserting or updating a key-value pair. It starts by hashing the key to find the correct bucket. It then has to check if the key already exists in that bucket's chain. If it does, we just update the value. If not, we append the new (key, value) pair.

def set(self, key, value):

index = self.\_hash(key)

bucket = self.buckets[index]

# Check if key already exists and update it

for i, (existing_key, _) in enumerate(bucket):

if existing_key == key:

bucket[i] = (key, value)

return

# If key is new, add it to the bucket

bucket.append((key, value))The get method follows a similar logic. It hashes the key to find the right bucket, then walks through the list at that index, checking each (key, value) tuple until it finds a matching key.

def get(self, key):

index = self._hash(key)

bucket = self.buckets[index]

# Search for the key in the bucket's chain

for existing_key, value in bucket:

if existing_key == key:

return value

# If key is not found, raise a KeyError

raise KeyError(f"Key '{key}' not found")This simple get and set approach neatly handles the core problem of collision resolution. When multiple keys hash to the same index, they just line up inside the same bucket's list. No fuss.

Implementing Automatic Resizing #

Our hash map works, but it has a ticking time bomb. As we keep adding items, those chains (the lists in our buckets) are going to get longer and longer. Searching a long chain starts to feel an awful lot like searching a list, and our map's speedy lookups will grind to a halt.

To maintain fast lookups, a hash map must resize itself when it gets too full. This involves creating a larger array and re-hashing all existing elements into the new structure.

To fix this, we'll add a resize method. A common rule of thumb is to resize when the map becomes 75% full, which is known as a load factor of 0.75.

First, let's update __init__ and set to keep track of how many items we have.

Updated __init__

def __init__(self, size=16):

self.size = size

self.buckets = [[] for \_ in range(self.size)]

self.count = 0Update at the end of the set method

def set(self, key, value): # ... existing set logic ...

self.count += 1 # Check if we need to resize

if self.count / self.size > 0.75:

self.resize()Now for the resize method itself. This is a big job. It needs to double the size of our bucket array, create a new set of empty buckets, and then re-insert every single old item into the new, larger array. The hashing has to be redone because the size of the map has changed!

Inside the SimpleHashMap class

def resize(self):

new_size = self.size * 2

old_buckets = self.buckets

# Reset the hash map with the new size

self.size = new_size

self.buckets = [[] for _ in range(self.size)]

self.count = 0

# Re-hash all old items into the new buckets

for bucket in old_buckets:

for key, value in bucket:

self.set(key, value)This resizing step is what keeps a hash map fast over the long haul. Sure, the resize operation is slow—it has to touch every single item. But it happens infrequently. That cost gets spread out (or "amortized") over all the super-fast O(1) insertions, ensuring our hash map Python implementation stays efficient as it grows.

By building this yourself, you've just replicated the core logic that makes Python's dict one of the most powerful and versatile data structures around.

Analyzing Hash Map Performance and Complexity #

Building our own hash map from scratch pulls back the curtain on how they work. But to really master them, we need to talk about performance. This is where Big O notation becomes our best friend, giving us a clear, standardized way to measure efficiency.

For any well-built hash map, the bread-and-butter operations—insertion, deletion, and lookup—all clock in with an average time complexity of O(1). This is the holy grail of performance. It means an operation takes a constant amount of time, whether the map holds ten items or ten million. It's just as fast to find your keys in a massive warehouse as it is in a small drawer.

But that incredible O(1) speed hinges on one critical assumption: that we have a good hash function spreading our keys out evenly.

The Worst-Case Scenario: O(n) #

So, what happens if our hash function is, for lack of a better word, terrible? Imagine a function so bad that it maps every single key to the exact same index. All our key-value pairs would pile up in one bucket, creating a single, massive linked list.

Now, when you try to retrieve an item, our get method has to trudge through that entire chain, checking each key one by one. Our hash map has just degenerated into a slow linear search, and the performance plummets to O(n). The time it takes is now directly proportional to the number of items (n), completely wiping out the hash map's main advantage.

A hash map's worst-case O(n) performance is almost always the result of too many hash collisions. This highlights why a good hash function and proper resizing aren't just nice-to-haves—they are fundamental to making the data structure work at all.

Thankfully, this is a solved problem in the real world. Python's internal hash function is expertly crafted to prevent this exact scenario. It uses some clever math to scatter keys widely across the available buckets, making the dreaded O(n) case vanishingly rare in practice. Getting these foundations right is crucial for anyone getting serious about data structures, which you can read more about in our data structures and algorithms for beginners guide.

Amortized O(1) Time and Resizing #

There's one operation that is definitely not O(1): resizing. When the hash map starts getting too full, it has to create a brand new, bigger array and re-hash every single item to move it over. That’s a pure O(n) operation, since it has to touch every element.

If resizing is O(n), how can we still claim hash maps are O(1)? The answer lies in amortized analysis.

Think of it this way: the expensive O(n) resizing operation only happens once in a blue moon. That high cost is "paid for" by the thousands of lightning-fast O(1) insertions that happened before it. When you average the cost of all operations over time, the impact of that occasional expensive resize becomes so small it's practically negligible. This gives us what's known as an amortized time complexity of O(1).

Even in demanding benchmarks against optimized C++ implementations, Python's dict holds its own, often only 10-20% slower on lookups while being more memory-efficient for dynamic workloads. Python's internals are fine-tuned to keep the load factor below 2/3 and typically double the capacity when a resize is triggered. That small millisecond hiccup for resizing is a tiny price to pay, averaged out over countless near-instant operations. You can explore a detailed benchmark of hash map performance to see how various implementations stack up.

This careful balance of average-case speed and smart space management is exactly why a hash map in Python is one of the most powerful and frequently used tools in a developer's arsenal.

Practical Hash Map Use Cases in Backend Engineering #

Theory is great, but it only gets you so far. The real magic of a hash map in Python happens when you see it solving actual problems. In backend engineering, where every millisecond counts, Python’s dict isn't just another data structure—it's one of the most fundamental tools we have for building fast, scalable apps.

Let's look at a few places where dictionaries are the undisputed star of the show.

Building an In-Memory Cache #

Database queries are, more often than not, the single biggest performance killer in a web application. An in-memory cache is a simple and brutally effective way to take the load off. And a hash map is the perfect tool for the job.

The idea is simple: store the results of expensive or frequent queries in a dictionary. You use a unique ID, like a product ID or a specific query string, as the key. Before you even think about hitting the database, you check the cache first.

A simple in-memory cache for a web app

product_cache = {}

def get_product_details(product_id): # First, check the cache

if product_id in product_cache:

print(f"Cache hit for product {product_id}!")

return product_cache[product_id]

# If not in cache, query the database (simulated)

print(f"Cache miss. Querying DB for product {product_id}...")

product_data = {"id": product_id, "name": "Super Widget", "price": 99.99}

# Store the result in the cache for next time

product_cache[product_id] = product_data

return product_data

# First call: hits the database

get_product_details(123)

# Second call: pulls instantly from the cache

get_product_details(123)With this pattern, repeat requests for the same data are served almost instantly, which drastically cuts latency and eases the strain on your database. The beautiful O(1) lookup speed of the dictionary makes this incredibly efficient.

Managing User Sessions #

Here's another classic use case: managing user sessions. When a user logs into your application, the server creates a unique session ID. This ID gets sent back to the user's browser, usually tucked inside a cookie.

On the backend, you need a way to connect that session ID back to a specific user's data. A hash map is the perfect fit.

Mapping session IDs to user information

active_sessions = {

"a1b2c3d4e5": {"user_id": 101, "username": "jane_doe", "permissions": ["read", "write"]},

"f6g7h8i9j0": {"user_id": 202, "username": "john_smith", "permissions": ["read"]}

}

def get_current_user(session_id): # Get user data with a single, fast lookup

return active_sessions.get(session_id, None)

# Example: Authenticating a request

user = get_current_user("a1b2c3d4e5")

if user:

print(f"Welcome, {user['username']}!")

else:

print("Invalid session. Please log in.")This direct key-value mapping is worlds faster than running a database query to look up session data on every single request.

Hash maps like Python's

dictare a cornerstone of modern backend systems. In fact, research shows they can slash lookup times by 5-10x compared to tree-based structures in production environments. An estimated 85% of Django applications rely on dictionaries for critical tasks like caching and session management. Discover more insights about Python hashmap performance on Stratascratch.

Processing API Responses and Aggregating Data #

Hash maps are also indispensable for wrangling data. When your application gets a JSON response from an API, Python’s json library naturally turns it into a dictionary. This makes it trivial to pull out the data you need using clear, readable keys.

Finally, think about a common data processing job: counting how many times each word appears in a big block of text. A dictionary offers a beautifully simple solution.

word_counts = {}

text = "the quick brown fox jumps over the lazy dog"

for word in text.split():

word_counts[word] = word_counts.get(word, 0) + 1

# word_counts will be {'the': 2, 'quick': 1, ...}This get(word, 0) + 1 pattern is a Python staple—it's clean, easy to read, and highly performant.

These examples just scratch the surface, but they show why getting comfortable with this data structure is an absolute must for any developer. To keep building on these core skills, check out our guide on essential algorithms with Python.

Common Dictionary Pitfalls and Advanced Techniques #

Knowing how to use a Python dict is one thing. Knowing all the ways it can break, and how to write code that avoids those traps, is what separates the pros from the amateurs.

While dictionaries are incredibly powerful, a few common pitfalls can trip up even seasoned developers. Let's walk through the most common issues and the more advanced techniques you can use to write truly robust, error-resistant code.

The Dreaded KeyError #

The most common landmine is the infamous KeyError. It blows up your program the moment you try to access a key that simply isn't there.

A simple if key in my_dict: check works, but it's clunky. There are far more elegant ways to handle this. Your first line of defense should be the .get() method. It tries to find a key, but if it comes up empty, it returns a default value you provide (like None) instead of crashing everything.

user_prefs = {"theme": "dark", "language": "en"}

This would raise a KeyError and stop your script cold

font_size = user_prefs["font_size"]

This safely returns 'medium' if "font_size" is missing. No crash.

font_size = user_prefs.get("font_size", "medium")

print(font_size) # Output: mediumBut what if you need a default value for any missing key, especially when you're counting things or grouping items? For that, collections.defaultdict is your best friend. It’s a dictionary subclass that automatically creates a default entry the very first time a new key is accessed. It's perfect for building clean frequency counters without messy initial checks.

Immutable Keys and Why They Matter #

Here’s a hard and fast rule for any hash map in Python: keys must be immutable. This is not a suggestion. You can use strings, numbers, or tuples as keys, but you absolutely cannot use a list or another dictionary.

The entire system of a hash map depends on a key's hash value never changing. If you could use a list as a key and then add an item to it, its hash value would change. The dictionary would then have no idea where it stored the original data. Python throws a

TypeErrorto prevent this chaos and protect the hash map's integrity.

If you try to use a mutable type as a key, Python won't even let you. It will immediately raise a TypeError. This isn't a minor guideline; it’s a fundamental constraint that keeps the whole structure from falling apart.

Why Your Custom Objects Might Be Slowing Things Down #

When you start using your own custom objects as dictionary keys, you suddenly become responsible for how they get hashed. If you write a bad __hash__ method—for example, one that returns the same hash value for many different objects—you create a disaster.

A poorly designed __hash__ method creates massive collisions, effectively turning your dictionary into a list. Your speedy O(1) lookups degrade into slow O(n) searches through long collision chains. It completely sabotages the dictionary's performance.

When you implement __hash__, make sure it properly distributes values, usually by combining the hashes of the object's immutable attributes. You can also explore creating powerful, one-line data structures like these using our guide to Python comprehensions.

Frequently Asked Questions About Python Hash Maps #

As you start working more seriously with Python, a few common questions about dictionaries and how they work under the hood tend to pop up. Let's clear up some of the most frequent ones.

Are Python Dictionaries and Hash Maps the Same Thing? #

Yes, for all practical purposes, a Python dict is a hash map.

"Hash map" is the name of the computer science data structure, the blueprint. "Dictionary" is simply what Python calls its specific, highly-optimized version of that blueprint. When you create a dict, you're using a hash map.

Why Can't I Use a List as a Dictionary Key? #

This is a great question that gets to the heart of how hash maps work. The short answer is that dictionary keys must be hashable, which means their hash value can never, ever change.

Lists are "mutable"—you can add, remove, or change items inside them. If you used a list as a key and then changed it, its hash value would change, and the dictionary would completely lose track of where it stored the value. It would break the entire lookup system.

This isn't just a Python thing; it's a fundamental rule for any hash map. To keep lookups reliable, keys must be immutable. This is why Python allows types like strings, numbers, and tuples as keys, but will throw a

TypeErrorif you try to use a list.

This rule ensures that a key you use to store a value is the exact same key you can use to find it later.

When Should I Use a Dictionary Instead of a List? #

The choice comes down to one simple question: how do you plan to find your data later?

-

Use a dictionary when you need to look things up by a unique identifier. Think of retrieving a user's profile by their email address or getting product details using a SKU. It’s all about key-based access.

-

Use a list when the order of items matters and you plan to access them by their position (index). It's perfect for a sequence of items you want to loop through or grab by number, like the first 5 items in a log.

Are Python Dictionaries Ordered? #

Yes. As of Python 3.7, the standard dict is guaranteed to remember the order in which you inserted the items.

This was a happy side-effect of an implementation change in Python 3.6, but the language officially made it a core feature in 3.7. Before that, if you needed to preserve insertion order, you had to reach for the collections.OrderedDict class. Now, it's built right in.

Ready to move beyond theory and build job-ready backend skills? Codeling offers a structured, hands-on curriculum that takes you from Python fundamentals to deploying production-grade APIs. Start building your portfolio and your career today at https://codeling.dev.