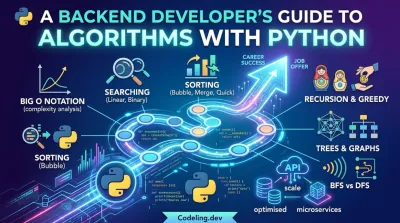

A Backend Developer's Guide to Algorithms With Python

If you want to build backend systems that are fast, scalable, and don't fall over under pressure, you need to get good at algorithms.

I'm not talking about memorizing abstract theory for a whiteboard interview. I’m talking about the practical skill of solving complex problems efficiently—and Python's straightforward syntax makes it the perfect tool for the job.

Why Python Algorithms Are a Backend Superpower #

Think of a massive, disorganized warehouse. If you need to find one specific package, you could wander around checking every shelf. It would work, eventually. But it's painfully slow and inefficient. Now, imagine an Amazon fulfillment center where robots and intelligent systems guide you to the exact item in seconds.

That’s what algorithms do for backend engineering. Your data is the inventory, and your algorithms are the logistics systems that manage it all. Learning algorithms with Python is about transforming that chaotic warehouse into a high-speed operation that can handle anything you throw at it.

From Theory to Tangible Skills #

Getting good at algorithms isn't an academic exercise; it's about building a mental toolkit for real-world problem-solving. When you learn to implement these concepts in clean, idiomatic Python, you're preparing for the daily challenges you'll face as a backend developer.

These skills have a direct payoff:

- Building Fast APIs: A sharp search algorithm can slash database query times, which means faster API responses and happier users. Nobody likes a loading spinner.

- Managing Large Datasets: Ever wonder how business intelligence dashboards instantly process and display huge amounts of data? It all comes down to smart sorting and data structuring algorithms.

- Creating Scalable Systems: An inefficient algorithm might work fine with 100 users, but it can bring your entire system to its knees at 10,000. Well-designed algorithms ensure your app stays fast and stable as you grow.

This practical focus is a huge reason Python has taken over. Its rise was capped when it officially surpassed JavaScript as the most-used programming language, according to Stack Overflow’s 2025 Developer Survey. This wasn't a small shift; it was driven by a massive 7 percentage point increase in usage from 2024 to 2025, largely thanks to its dominance in backend development and AI. You can dig into more software development statistics and trends to see the full picture.

Building Your Backend Portfolio #

At the end of the day, your goal is to build software that works beautifully under the hood. A portfolio that proves you can solve problems with clean, efficient code is what gets you hired.

By focusing on algorithms, you’re not just learning to code; you’re learning to engineer solutions. You demonstrate an understanding of performance trade-offs, scalability, and system design—the core competencies of a great backend developer.

This guide is designed to get you there. We'll walk through implementing essential algorithms in Python, showing you exactly how they power the backend features you use every day. You’ll move beyond writing simple scripts and start building the kind of robust, high-performance applications that define a successful engineering career.

The Essential Toolkit: Search and Sort Algorithms #

At some point, every backend developer has written code that felt fast on their machine, only to watch it crumble under real-world load. Two of the most common culprits behind this are inefficient searching and sorting. Getting these right is one of the first major hurdles in building high-performance backend systems.

Think about it like this: you need to find a single book in a massive, unorganized library. A linear search is the brute-force approach. You start at the first aisle, check every single book on every shelf, one by one. It’s thorough, but it's a nightmare. If the book is on the very last shelf, you've wasted a ton of time.

Now, imagine the library is perfectly alphabetized by title. You can use a binary search. You go straight to the middle, see if your book's title comes before or after that point, and instantly eliminate half the library. You repeat this, halving the search area each time, until you zero in on the exact location. The difference in speed isn't just noticeable; it's monumental.

This is the core of what we're talking about. The right algorithm separates a clunky, slow application from a fast, responsive one. Learning to wield these tools in Python is a fundamental step toward becoming a pro.

The payoff for mastering these concepts is huge, impacting your code's performance, its ability to scale, and ultimately, your value as an engineer.

Time and Space Complexity: The Pro's Measuring Stick #

So how do we compare algorithms in a standardized way? We use Big O notation. It's not about measuring the exact runtime in seconds, but about understanding how an algorithm's performance changes as the amount of data grows.

We look at two primary metrics:

- Time Complexity: How does the runtime scale as the input size (

n) increases? - Space Complexity: How much extra memory does the algorithm need as the input size (

n) grows?

Our library-walking linear search has a time complexity of O(n). In the worst-case scenario, you have to look at all n items. The much smarter binary search, however, is O(log n). This means that even if the library doubles in size, it only takes one extra step to find the book. That’s a massive win for scalability.

Essential Searching Algorithms in Python #

Let's see what these look like in actual Python code. A linear search is dead simple to write, which is its main appeal. It’s fine for tiny, unsorted lists, but it's a performance trap for anything larger.

def linear_search(data, target):

"""

Finds a target by checking every single element.

Time Complexity: O(n)

"""

for i in range(len(data)):

if data[i] == target:

return i # Found it! Return the index.

return -1 # Nope, not here.Binary search is dramatically more efficient, but it has one non-negotiable rule: the data must be sorted first. It works by constantly narrowing its focus on the part of the list where the target could be.

def binary_search(sorted_data, target):

"""

Finds a target in a \_sorted* list by halving the search space.

Time Complexity: O(log n)

"""

low = 0

high = len(sorted_data) - 1

while low <= high:

mid = (low + high) // 2

guess = sorted_data[mid]

if guess == target:

return mid

elif guess < target:

# The target is in the right half

low = mid + 1

else:

# The target is in the left half

high = mid - 1

return -1A Guide to Sorting Algorithms #

Sorting data into a logical order is a foundational task in backend engineering. It’s often the necessary first step before you can run an efficient search or process records in a predictable way.

While Python has fantastic built-in tools like .sort() and sorted(), knowing what's happening under the hood is what separates a good developer from a great one. (For a deep dive, check out our guide on how to sort in Python).

Bubble Sort is the first sorting algorithm most people learn. It repeatedly steps through a list, compares adjacent items, and swaps them if they're in the wrong order. With a time complexity of O(n²), it's brutally inefficient for any real-world use case, but it’s a fantastic first step for understanding how sorting works.

For practical applications, you'll almost always turn to more advanced algorithms like Merge Sort and Quick Sort. They both use a powerful "divide and conquer" strategy, but they go about it differently.

- Merge Sort: This algorithm splits the list in half, recursively sorts each half, and then merges the two sorted halves back together. It's incredibly reliable, with a guaranteed worst-case time complexity of O(n log n). It's also "stable," meaning if you have two equal elements, their original order is preserved.

- Quick Sort: This one picks a "pivot" element and rearranges the array so that all elements smaller than the pivot come before it, and all elements larger come after. It then recursively sorts the sub-arrays. It's often faster than Merge Sort on average, but its worst-case performance can dip to O(n²) if you get unlucky with your pivot choices.

To help you keep these straight, here's a quick comparison table for the sorting algorithms we’ve discussed.

Comparing Common Sorting Algorithms #

| Algorithm | Best Case Time Complexity | Average Case Time Complexity | Worst Case Time Complexity | Space Complexity |

|---|---|---|---|---|

| Bubble Sort | O(n) | O(n²) | O(n²) | O(1) |

| Merge Sort | O(n log n) | O(n log n) | O(n log n) | O(n) |

| Quick Sort | O(n log n) | O(n log n) | O(n²) | O(log n) |

This table is a great cheat sheet, but the real skill is developing the intuition for which algorithm to reach for. Do you need the stable, predictable performance of Merge Sort for a critical financial transaction system? Or is the typical speed of Quick Sort better for a less critical internal tool? These are the kinds of decisions that define an experienced backend engineer.

Alright, you've got the hang of searching and sorting. Now we're moving up a level. Many backend problems aren't simple, linear lists. They're more like puzzles nested inside other puzzles, where solving one piece reveals another. This is where you need to reach for more advanced strategies like recursion and greedy algorithms.

These aren't just new functions to memorize; they're entirely new ways of thinking about how to solve a problem. They'll become essential tools in your Python arsenal.

Let's break down how these two powerful approaches work and when you should use them.

The Power of Recursion #

Think about a set of Russian nesting dolls. To get to the smallest doll, you open the biggest one, which has a slightly smaller doll inside. You open that one, and so on, until you finally reach the tiny, solid doll at the center.

Recursion is the programming version of this. It's a function that calls itself to solve smaller, identical versions of the main problem.

The absolute key to making recursion work is the base case—this is your "smallest doll." It's the condition that tells the function to stop calling itself. Without a base case, the function would just call itself forever, quickly eating up all your system's memory and causing a dreaded "stack overflow" error.

A classic example is calculating a factorial. The factorial of a number n (written as n!) is just the product of all positive integers up to n. So, 5! is 5 * 4 * 3 * 2 * 1. But look closer: 5! is really just 5 * 4!, and 4! is just 4 * 3!. This self-referential pattern is a perfect fit for recursion.

def factorial(n):

"""Base case: The factorial of 1 is 1. This stops the recursion."""

if n == 1:

return 1 # Recursive step: Call the function with a smaller version of the problem.

else:

return n \* factorial(n - 1)Example: print(factorial(5)) will output 120

When you call factorial(5), it calls factorial(4), which calls factorial(3), all the way down until it hits the base case at factorial(1). Then, the chain of results is multiplied back up to give you the final answer.

The Greedy Algorithm Strategy #

While recursion breaks big problems down, a greedy algorithm builds a solution up. It does this by making the most tempting choice at each immediate step. It doesn't look ahead or worry about future consequences; it just picks what looks best right now. Think of it as a brilliantly simple, short-sighted strategy that often works surprisingly well.

The best real-world analogy is making change. If you need to give someone 67 cents and you have quarters, dimes, nickels, and pennies, the greedy approach is what you'd do naturally:

- Grab the biggest coin you can: a quarter. You still owe 42 cents.

- Grab another quarter. You still owe 17 cents.

- A quarter is too big now. Grab the next biggest: a dime. You still owe 7 cents.

- A dime's too big. Grab a nickel. You owe 2 cents.

- Grab a penny. You owe 1 cent.

- Grab one last penny. You're done.

For standard US currency, this strategy is flawless every single time.

A greedy algorithm aims for a locally optimal solution at each stage with the hope of finding a global optimum. It's a powerful tool for optimization problems where a series of "good enough" choices can lead to an excellent overall result.

When to Be Greedy #

This approach is incredibly fast and efficient, which makes it perfect for certain backend jobs. For example, a job scheduling system might use a greedy algorithm to always run the shortest job first, which minimizes the average wait time for all users. In network routing, it could find a path by always choosing the next hop with the lowest latency.

However, you absolutely have to know when a greedy strategy will backfire. Imagine your coin system also had a 12-cent coin, and you needed to make 16 cents in change. A greedy algorithm would grab the 12-cent coin, leaving 4 cents. It would then add four 1-cent coins, for a total of five coins. But the truly optimal solution was two 8-cent coins (if they existed), or another combination the greedy choice blinded you from seeing.

This highlights the trade-off: greedy algorithms give you speed, but they don't guarantee the absolute best solution for every type of problem. Learning to spot which problems are a good fit for this approach is a crucial skill in algorithms with Python.

Alright, we've spent a lot of time with data that sits neatly in a line. But let's be real—most of the interesting problems out there involve data that's messy, interconnected, and all over the place.

Think about a social network, your family tree, or the web of flights connecting different cities. This isn't linear data. It's defined by its relationships. To get a handle on it, we need to move past simple lists and arrays and into the world of non-linear data structures.

Two of the most important ones you'll ever learn are trees and graphs. Once you know how to model and traverse these structures, you unlock a whole new level of problem-solving for complex backend challenges using algorithms with Python.

Introducing Trees: Hierarchical Data #

Picture a company's org chart. You've got the CEO at the very top, a layer of VPs reporting to them, directors under the VPs, and so on down the line. That's a perfect real-world example of a tree. It's a hierarchical structure with a single starting point (the root) and branches connecting to other points (nodes).

The big rule for trees is that there are no loops. A manager can't report to someone who reports back to them. This clean, simple structure is surprisingly powerful for representing things like file systems (folders inside of folders) or the nested structure of an HTML document.

A special kind of tree you'll see everywhere is the Binary Search Tree (BST). In a BST, every node has at most two children, and it follows one strict rule:

- All values in a node's left subtree must be less than the node's value.

- All values in its right subtree must be greater than the node's value.

This ordering makes searching ridiculously fast. Just like a binary search on a sorted list, you can chop the search space in half at every step. This gives you an average search time of O(log n), which is why BSTs are fantastic for tasks that need constant lookups, insertions, and deletions, like indexing data in a database.

Graphs: The Ultimate Network Model #

So, what happens when your data doesn't fit into a neat hierarchy? What if any point can connect to any other point? That's where graphs come in. They are the ultimate data structure for modeling networks.

A graph is just a collection of nodes (also called vertices) connected by edges. Think of:

- A Social Network: People are the nodes, and a friendship is an edge.

- Flight Routes: Airports are the nodes, and a direct flight is an edge.

- The Internet: Web pages are the nodes, and hyperlinks are the edges.

Unlike trees, graphs can absolutely have cycles. You can be friends with someone who's friends with someone else, who is friends with you—that's a loop. Graphs are the backbone for solving some of the most fascinating and difficult problems in computer science.

How to Traverse a Graph #

Once you've got your data in a graph, the most common thing you'll do is explore it. We call this graph traversal. The two fundamental algorithms you need to know are Breadth-First Search (BFS) and Depth-First Search (DFS).

Choosing between BFS and DFS depends entirely on the question you're asking. Are you looking for the shortest path between two points, or do you just need to explore every possibility? Your answer determines which traversal algorithm is the right tool for the job.

Let's use a maze analogy to make the difference crystal clear.

Breadth-First Search (BFS): Explores Level by Level

Imagine you're standing at the entrance of a maze. If you use a BFS approach, you'd first check all paths that are just one step away. Then, from all of those spots, you'd explore all paths that are two steps away, and so on. You explore the maze in expanding, concentric circles.

BFS is guaranteed to find the shortest path between two nodes in a graph where all edges have the same weight. This makes it perfect for:

- GPS Navigation: Finding the route with the fewest turns.

- Social Networks: Finding the shortest connection path between two people (e.g., "friends of friends").

- Web Crawlers: Discovering all pages within a certain number of clicks from a starting page.

Depth-First Search (DFS): Explores One Path to the End

Now, back at the maze entrance, let's try a DFS approach. You'd pick a single path and follow it all the way until you hit a dead end. Once you hit that wall, you backtrack to the last intersection where you had a choice and try the next unexplored path. You dive deep first, then pull back.

DFS is fantastic for problems where you need to check if a path exists at all, or when you need to explore every single possibility. Its use cases include:

- Solving Puzzles: Finding a way out of a maze or solving a Sudoku.

- Topological Sorting: Figuring out a valid order to complete tasks with dependencies, like a software build process.

- Detecting Cycles: Checking if a graph has any loops, which is critical in dependency graphs to avoid getting stuck in an infinite loop.

These non-linear structures are at the very heart of many complex backend systems. Learning to implement tree and graph algorithms with Python opens up a completely new world of problem-solving, letting you model and analyze the connected, messy data that actually runs the world.

Alright, let's connect the dots between the algorithms we've been learning and the actual work you'll be doing as a backend engineer. It's one thing to understand how an algorithm works on a whiteboard, but it's another thing entirely to see it solve a real-world performance bottleneck.

This is where the theory becomes practical. Your grasp of searching, sorting, and graph traversal is what allows you to build the fast, scalable, and smart systems that companies rely on.

Let's dive into some concrete backend scenarios where these algorithms aren't just academic exercises—they're the tools of the trade. We’ll see how they show up in APIs, social features, and data pipelines.

This isn't a niche skill, either. Python's power in this area is why it's become a dominant force in the industry. In fact, over 151,000 verified companies use Python, powering a staggering $650 billion in collective revenue. This isn't just startups; we're talking about giants like Walmart and NVIDIA who use it for everything from logistics to machine learning. You can see for yourself how deeply Python is embedded in major corporations to get a sense of the scale.

Scenario 1: Optimizing API Performance With Efficient Search #

Picture this: you’re building a REST API for a growing e-commerce site. A key feature is the /products/search endpoint, which lets users find products by name.

A beginner's approach might be to fetch every single product from the database and then loop through the list in Python to find what matches the user's query. This is a classic linear search. And for 100 products, it works just fine.

But what happens when the store has 100,000 products and thousands of people are searching at once? That endpoint will slow to a crawl. The database will be overloaded, and your API response times will become painfully slow. The user experience will be terrible.

This is where a savvy backend developer immediately sees a search problem. By making sure the products are indexed and sorted alphabetically by name, you can switch to a binary search. Better yet, you can let the database's own optimized query engine handle it, which uses similar principles. This one change drops the search complexity from O(n) to a blistering O(log n). Suddenly, the system can handle massive inventory growth with almost no slowdown in search speed.

Scenario 2: Building a Friend Recommendation Engine #

Let's tackle another common feature: the "people you may know" engine you see on social media. At its core, this is a problem about relationships and connections, which makes it a perfect use case for a graph data structure.

Think of it like this: every user is a node (or a vertex) in the graph. When two users are friends, we draw an edge between their nodes.

To find friend suggestions for a user, you can run a Breadth-First Search (BFS) starting from that user's node:

- Level 1: The BFS first finds all the user's direct friends.

- Level 2: It then moves outward to find their friends (the "friends of friends").

- Filtering: Finally, you filter out anyone the original user is already connected to.

The people left over at Level 2 are your best candidates for recommendations. The BFS is incredibly efficient at exploring this social network layer by layer, finding the closest connections who aren't yet friends.

Scenario 3: Processing Data for a Business Dashboard #

Imagine you're tasked with building the backend for a business intelligence (BI) dashboard. Every morning, you get a massive, completely unsorted file of the previous day's sales transactions. A manager needs to see the top sales regions, products, and employees, all neatly sorted by revenue.

Your job is to turn that chaotic data dump into something useful. This is where sorting algorithms become your best friend.

For instance, you might use a stable sort like Merge Sort to first organize transactions by region, and then by employee within each region. The "stable" part is key—it ensures that if two employees have the same sales, their original relative order is kept, which can be important. For a simple sort by total revenue, Quick Sort might be a better choice for its fantastic average-case speed.

By choosing the right algorithm for the job, you transform raw data into an organized, actionable view that people can use to make real business decisions. If you're dealing with a dataset so large it won't even fit in memory, you can get even more advanced. Check out our guide on using generators in Python for handling huge data streams efficiently.

These scenarios show that algorithms aren't just interview trivia. They are the fundamental tools you'll use daily to solve performance bottlenecks, create intelligent features, and manage data at scale.

Your Roadmap to Interview Success and Beyond #

Alright, you've made it through the weeds of sorting, searching, and graph traversals. Now for the most important part: turning all that theory into a job offer. Let's talk about how to bridge the gap from practice problems to actually acing the technical interview.

The next move is to get your hands dirty on platforms like LeetCode and HackerRank. But listen, don't try to solve every single problem on the site. That’s a fast track to burnout. The real goal is pattern recognition.

From Practice to Portfolio #

Start by working through problem lists organized by algorithm type. Spend a week focused entirely on array problems. Then a week on binary search. Then another on sliding window problems. This repetition builds the mental muscle you need.

The point isn't to memorize solutions. It’s to get to a place where you see a new problem and your brain instantly goes, "Ah, this smells like a graph problem," or "A greedy approach would probably nail this." That instinct is the most valuable skill you can possibly build.

As you knock out these problems, make sure you're pushing your code to a dedicated GitHub repository. This isn't just about keeping a record; it’s about building a living, breathing part of your portfolio. It’s tangible proof that you can solve problems and write clean, efficient code.

Acing the Technical Interview #

Here's the thing about the technical interview: it's not a test, it's a performance. Getting the right answer is only half the battle. The other half is showing how you think.

As you work through a problem, you have to narrate your thought process out loud. Talk about your initial gut reactions, the different angles you're considering, and why you’re choosing one data structure over another. You’re being evaluated on your communication as much as your code.

And you absolutely must be ready to talk complexity. Once you've got a solution, you should be able to confidently state its time and space complexity using Big O notation and defend your choices. Explain the trade-offs. For example, be ready to say, "I went with a hash map here, which uses O(n) space, because it gets us an O(n) time complexity, which is much better than the O(n^2) brute-force approach."

For a deeper look into the career itself, you might find our guide on how to become a backend developer useful.

Ultimately, a strong portfolio combined with clear, confident communication is what seals the deal. Showing you can not only build features but also explain your technical decisions with clarity is what turns an interview into a job offer.

Frequently Asked Questions About Python Algorithms #

Getting into algorithms can feel like learning a whole new language. It’s normal to have questions. Here are the straight answers to some of the most common ones I hear from developers just starting out.

Can I Get a Backend Job Without Knowing Algorithms? #

Let's be direct. You might be able to snag a junior role that's mostly about basic scripting, but you'll hit a ceiling fast. It's almost impossible to get hired at a competitive company—or get promoted—without a solid understanding of algorithms and data structures.

Think about it from the employer's perspective. They don't ask you to reverse a linked list to be difficult. They're testing how you think. Can you write code that doesn't fall over when traffic 100x's? Do you instinctively reach for a solution that’s efficient and scalable, or one that will grind the database to a halt? That's what they're really asking.

Why Use Python for Algorithms Instead of C++ or Java? #

Because Python lets you focus on the algorithm, not the ceremony. Its clean, readable syntax means you spend your brainpower on the logic of the solution itself, not on wrestling with complex memory management or pages of boilerplate code.

Python's superpower is its expressiveness. You can sketch out a complex idea like a graph traversal or binary search in just a few lines of code. This makes it way easier to learn, debug, and—crucially—explain your thought process during a technical interview.

Sure, for tasks where every nanosecond counts, a team might reach for C++. But for the vast majority of backend development, and especially for learning and interviewing, Python's blend of simplicity and power is the best tool for the job.

How Much Math Do I Really Need to Know? #

You can put away your advanced calculus textbook. You don't need a math degree, but you can't ignore it completely either.

The most important concept to get comfortable with is logarithms. They are everywhere in complexity analysis, and you absolutely need to understand them to grasp why an algorithm like binary search is so efficient (O(log n)).

Beyond that, a working knowledge of discrete math concepts—like sets, graphs, and basic probability—will serve you well. The goal isn't to write academic proofs; it's to have the mental tools to analyze a problem and choose the right approach.

Ready to turn theory into a job-ready portfolio? Codeling provides a structured, hands-on curriculum that takes you from Python fundamentals to building complete backend applications. Stop watching and start building at https://codeling.dev.