Django REST API Tutorial


Most Django REST API tutorials go wrong in one specific way: they teach you how to finish a demo, not how to build an API you can defend in a code review.

You can follow a video, wire up a ModelViewSet, click around the browsable API, and still have no clue why your endpoints are shaped the way they are, where validation belongs, or how you'd change the design once a product manager asks for versioning, permissions, and tests. That's the trap. It produces repositories full of copied patterns and very little engineering judgment.

A serious API project looks different. It has a clear contract, deliberate models, validation that lives in the right layer, permissions that reflect real access rules, tests that protect behavior, and a workflow that survives beyond your laptop. That is what employers notice when they open your GitHub.

Why This Is Not Just Another Django REST API Tutorial #

Fast results make for good demos and bad habits.

A lot of beginner tutorials are built around a single moment: the first 200 OK and a page full of JSON. That milestone matters, but it is a weak proxy for backend skill. Plenty of junior developers can follow a code-along, generate CRUD endpoints, and still struggle the first time a real project needs idempotency, object-level permissions, auditability, or a breaking schema change.

Django REST Framework is popular for good reasons. It has existed since 2011, is widely used in production, and has a mature ecosystem around serializers, viewsets, authentication, and testing. JetBrains' 2023 Python Developers Survey reported that Django REST Framework is used in 70% of Django projects they surveyed, which helps explain why so many learning resources default to it (JetBrains Python Developers Survey 2023). Popular tools reduce setup friction. They do not make design decisions for you.

That distinction matters early.

A portfolio API that earns respect in code review usually starts before views.py or serializers.py. It starts with questions about resource boundaries, data ownership, invariants, and failure cases. If those decisions are fuzzy, the code can still look clean while the API contract remains weak. I have reviewed projects with tidy DRF abstractions and no clear answer to a basic product question: "What behavior are we promising clients?"

Practical rule: Build the contract first. Then choose the DRF abstractions that fit it.

If you need to refresh the request-response model before getting into API design, read this explanation of how APIs work. That context is more useful than memorizing class names because it clarifies what the server owes the client, and where your backend code sits in that exchange.

What a hireable project actually signals #

Hiring managers and senior engineers do not get excited because a tutorial app "works." They look for judgment. They want evidence that the project could survive one month of real use, a second contributor, and a few changed requirements without collapsing into patches.

That usually shows up in a few concrete ways:

- Clear boundaries: business rules are not scattered across models, serializers, and views without a reason.

- Deliberate validation: field validation, cross-field validation, and database constraints each live in the layer that can enforce them reliably.

- Test coverage with intent: tests protect behavior that matters, not just happy-path endpoint clicks.

- Operational awareness: authentication, permissions, environment configuration, and deployment are treated as part of the project, not cleanup work for later.

- Documentation that helps another engineer: setup, assumptions, and API behavior are easy to verify without reading the whole codebase.

A small API built with that mindset does more for your portfolio than ten cloned tutorials. It shows not only that you can write code, but also that you can make backend decisions other engineers can trust.

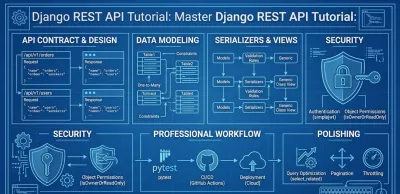

Laying the Foundation: Your API Architecture and Design #

A weak API usually does not fail because the developer picked the wrong serializer class. It fails earlier, during design, when routes mirror database tables, permissions get added as patchwork, and no one defines which rules belong to the domain versus the transport layer.

Start with the contract, not the framework #

Opening views.py first feels productive. It usually creates rework.

Start with resource design and write down the contract before implementation. That habit forces decisions early, while they are still cheap to change. It also keeps the API shaped around client behavior instead of whatever fields happened to land in the first model draft.

The first questions are usually architectural, not syntactic:

- What are the core entities? Orders, projects, tasks, users, invoices.

- What relationships matter? One-to-many, many-to-many, ownership, lifecycle dependencies.

- What actions belong to the resource? CRUD covers the baseline, but real systems often need domain actions such as approve, archive, publish, or cancel.

- What should clients never see? Internal fields, audit metadata, security-sensitive values.

A simple contract table catches a surprising number of design mistakes before they turn into migrations and endpoint churn.

| Concern | Good question |
|---|---|
| Resource | What noun does this endpoint represent? |
| Input | What fields may a client send? |
| Output | What fields should the API expose? |
| Rules | What invariants must always hold? |
| Access | Who can list, read, create, update, delete? |

If you want a practical reference for route naming, status codes, filtering, and versioning, review these REST API design best practices for maintainable endpoints before you lock in URL patterns.

Model the domain before the database #

Junior developers often model data by adding fields until the form submits. Professional API work is stricter. The schema has to express business truth clearly enough that serializers, views, and tests are not compensating for weak assumptions.

A good model answers hard questions early. Can a canceled subscription return to trial? Should invoices survive account deletion? Is soft delete protecting auditability, or is it hiding poor retention decisions? Those are domain choices first and Django choices second.

Database constraints matter here. So do naming choices.

If a rule must always hold, enforce it in the lowest reliable layer. Use database constraints for invariants that cannot be violated. Use model methods or domain services for state transitions that involve business logic. Use serializer validation for request-shape and API-facing feedback. Teams that blur those responsibilities usually end up debugging the same rule in three places.
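To make the middle layer concrete, here is a minimal sketch of a state-transition rule kept in one small domain function instead of being repeated in each view. The status names, the `ALLOWED_TRANSITIONS` table, and the `change_status` helper are all hypothetical, chosen to echo the subscription question above; a real project would attach this logic to its own model or service module.

```python
from enum import Enum


class Status(str, Enum):
    TRIAL = "trial"
    ACTIVE = "active"
    CANCELED = "canceled"


# Hypothetical policy: the target states each state may move to.
ALLOWED_TRANSITIONS = {
    Status.TRIAL: {Status.ACTIVE, Status.CANCELED},
    Status.ACTIVE: {Status.CANCELED},
    # Assumption for this sketch: a canceled subscription never returns to trial.
    Status.CANCELED: set(),
}


class InvalidTransition(Exception):
    """Raised when a requested status change breaks the domain rule."""


def change_status(current: Status, target: Status) -> Status:
    """Return the new status, or raise if the transition is not allowed."""
    if target not in ALLOWED_TRANSITIONS[current]:
        raise InvalidTransition(f"{current.value} -> {target.value} is not allowed")
    return target
```

Because the rule lives in one function, a view, a management command, and a background job would all get the same answer, and the serializer only has to translate `InvalidTransition` into a client-facing error.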

Choose the framework for the working style you want #

DRF is still the default for a reason. It has mature conventions, broad community support, and a lot of hiring-market relevance. If you join an existing Django backend team, there is a good chance you will touch DRF.

Django Ninja is worth examining too, especially if you prefer schema-first design, strong type hints, and automatic OpenAPI output with less ceremony. The trade-off is not "old versus new." The trade-off is convention depth versus lighter structure.

A practical comparison looks like this:

| If you value | DRF tends to fit | Django Ninja tends to fit |

|---|---|---|

| Ecosystem maturity | Strong | Growing |

| Conventional CRUD structure | Excellent | Good |

| Serializer-heavy workflow | Natural | Less central |

| Type-hint-first design | Possible | Core experience |

| OpenAPI-first ergonomics | Good with extra tooling | Strong by default |

I usually recommend DRF for a portfolio project when the goal is to show employers that you understand common production patterns in Django. I recommend taking a serious look at Ninja when the project benefits from typed request and response schemas, and you want the API contract to stay close to the function signatures. The right choice depends less on trend and more on how your team wants to express validation, documentation, and endpoint behavior.

The key habit is intentionality. A portfolio API stands out when the architecture shows restraint, clear boundaries, and decisions another backend engineer can extend without rewriting half the project.

Implementing the Core Logic: Models, Serializers, and Views #

Good API code usually looks uneventful. That is a sign the architecture is doing its job.

By the time you reach models, serializers, and views, the main design decisions should already be settled. Resource boundaries, ownership rules, naming, and error behavior should not still be drifting. If they are, implementation turns into product design by accident, and that usually produces code you cannot trust six weeks later.

Models are the source of truth #

A Django model does more than map to a table. It defines rules the rest of the application assumes are true.

That is why business invariants belong close to the model layer. If an order cannot move from cancelled back to paid, or a task must always belong to a project, those rules should not depend on whether the request came from one particular endpoint. Put those constraints where every code path has to respect them.

Three habits improve model design fast:

- Keep invariants close to the data: use model constraints, clean methods, or clearly named domain methods for rules that must hold everywhere.

- Be strict about nullability: every nullable field adds another state your API, tests, and client code now have to handle.

- Model relationships explicitly: good foreign keys and related names make query behavior and serializer design much clearer later.

A junior project often treats the model as passive storage and pushes logic upward into views. Production code pays for that choice with duplicated checks, inconsistent behavior, and painful refactors.

Serializers define your public contract #

Serializers are where API design becomes visible. They decide what the client may send, what the client may read back, and which validation errors appear at the boundary.

A basic DRF example from GeeksforGeeks shows why teams like this workflow. A small Book API can expose a few fields cleanly and avoid hand-written JSON parsing by using serializers and viewsets (GeeksforGeeks example and discussion). The productivity gain is real. The risk is that convenience encourages developers to expose database structure directly and call it an API design.

That shortcut is fine for a demo. It is weak for a portfolio project.

Use serializers with intent:

- Expose only fields the client needs: internal status flags, audit fields, and implementation details often belong out of the public response.

- Validate inputs at the boundary: field shape, cross-field checks, and request-specific rules fit naturally here.

- Split read and write serializers when the representation differs: a rich nested response and a minimal write payload do not need to share one class.

- Treat serializer names as part of the design: TaskCreateSerializer and TaskDetailSerializer communicate more than a generic TaskSerializer that tries to do everything.

One practical test helps here. If renaming a database column would break your API contract, the serializer layer is too thin.

Views and viewsets should coordinate HTTP behavior #

Views should handle request flow, choose the serializer, call the right queryset, and return the response. They should not become the place where every business rule, side effect, and special case gets buried.

ModelViewSet is a good default for conventional resources because it keeps common CRUD endpoints consistent and reduces boilerplate. That does not mean every endpoint deserves a viewset. A portfolio-worthy API shows judgment. Use the high-level abstraction when the resource behavior is standard. Drop to generic views or explicit API views when the workflow has real domain steps, unusual query patterns, or action-specific rules.

A useful rule set looks like this:

- Use ModelViewSet for ordinary create, list, retrieve, update, and delete behavior.

- Use generic class-based views when you want DRF structure without the full action surface.

- Use custom service functions or model methods when a request triggers business work that should be reusable outside HTTP.

- Override get_queryset() and perform_create() sparingly. If those methods start carrying policy, branching rules, and side effects, the view is doing too much.

I usually tell junior developers to read views by asking one question: does this class explain HTTP, or does it explain the business? If it explains the business, the abstraction boundary is slipping.

A maintainable split looks like this:

- Model: data rules, relationships, persistent truth

- Serializer: input validation and output shape

- View: HTTP orchestration

- Service layer or domain helper: multi-step business actions and workflows

That separation does not make the tutorial app feel more exciting. It makes the codebase easier to test, easier to review, and much easier for another engineer to extend without rewriting the whole API.

Fortifying Your API: Authentication and Permissions #

Security design shapes the API long before deployment. Teams that treat auth as a last-mile feature usually end up patching permission bugs in views, serializers, and frontend code because the trust model was never made explicit.

Know the difference between identity and authority #

Start with two separate questions.

- Authentication answers who the caller is.

- Permissions answer what that caller is allowed to do.

That distinction sounds basic, but it drives architecture. If a codebase mixes the two, permission checks get scattered across endpoints and become hard to audit. A user may be valid, authenticated, and still forbidden from updating a record they do not own. Professional API work depends on making that rule obvious in code.

For many internal APIs, session auth or simple token auth is enough. For SPAs and mobile clients, JWT with djangorestframework-simplejwt (Simple JWT) is a common choice, as summarized in this Django REST API guide. The better question is not "what is popular?" It is "what failure mode can this system tolerate?" JWT lets the server verify a token by its signature instead of looking up stored token or session state on each request, but that makes revocation and rotation more important. Session auth is simpler to invalidate, but it fits browser clients better than third-party consumers.
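If JWT is the deliberate choice, the revocation and rotation trade-off shows up directly in settings. The fragment below is a sketch that assumes djangorestframework-simplejwt is installed; the lifetimes are illustrative values, not recommendations.

```python
from datetime import timedelta

# settings.py fragment (illustrative values)
REST_FRAMEWORK = {
    "DEFAULT_AUTHENTICATION_CLASSES": [
        "rest_framework_simplejwt.authentication.JWTAuthentication",
    ],
}

SIMPLE_JWT = {
    # Short-lived access tokens limit the damage of a leaked token.
    "ACCESS_TOKEN_LIFETIME": timedelta(minutes=5),
    "REFRESH_TOKEN_LIFETIME": timedelta(days=1),
    # Issue a new refresh token on each refresh...
    "ROTATE_REFRESH_TOKENS": True,
    # ...and blacklist the old one (requires the
    # rest_framework_simplejwt.token_blacklist app in INSTALLED_APPS).
    "BLACKLIST_AFTER_ROTATION": True,
}
```

Note that the blacklist reintroduces a database lookup on refresh, which is the price of being able to revoke tokens.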

Permissions should mirror the domain #

Good permissions read like policy, not improvisation.

A healthy default is least privilege. Instead of asking whether a logged-in user can hit an endpoint, ask whether that specific user should perform that action on that specific object. That usually leads to clearer APIs and fewer accidental data leaks.

A simple model helps:

| Layer | Question |
|---|---|
| Authentication | Who is calling? |
| Global permission | May this caller access this endpoint at all? |
| Object permission | May this caller act on this resource instance? |

Rules like IsOwnerOrReadOnly are useful because they encode ownership directly. They also force the team to define what "owner" means in the model instead of hand-waving the decision in the view. Microsoft documents the impact of explicit access control in its guidance on implementing least privileged access, including reported security compliance improvements of up to 85% when organizations reduce unnecessary permissions.

I usually recommend one habit early. Write at least one test for every "should not be allowed" case. Teams catch permission mistakes faster that way than by reviewing happy-path code. If you need a refresher on structuring those tests cleanly, this guide on writing unit tests in Python is a solid starting point.

Secure APIs are usually boring. The rules are explicit, repeated consistently, and easy to inspect.

CORS is often the real integration bug #

Frontend developers often report authentication failures when the browser is blocking the request before auth logic runs. That is a transport policy problem, not an identity problem.

MDN's CORS guide explains the mechanics clearly, and industry surveys such as the Postman State of the API report have repeatedly shown that CORS and authorization setup are common causes of early integration friction. In practice, the fix is usually straightforward. Keep allowed origins explicit, avoid wildcard settings with credentials, and test preflight behavior before debugging tokens.
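As a sketch of what "explicit origins" can look like, the settings fragment below assumes the third-party django-cors-headers package, which is one common way to handle CORS in Django. The origin URLs are placeholders.

```python
# settings.py fragment, assuming django-cors-headers is installed
INSTALLED_APPS = [
    "corsheaders",
    # ...the project's other apps
]

MIDDLEWARE = [
    # Placed high in the list, before middleware that can generate responses.
    "corsheaders.middleware.CorsMiddleware",
    "django.middleware.common.CommonMiddleware",
    # ...the rest of the middleware stack
]

# Name each allowed frontend origin instead of allowing everything.
CORS_ALLOWED_ORIGINS = [
    "https://app.example.com",  # placeholder production frontend
    "http://localhost:3000",    # placeholder local dev server
]

# Only needed when the browser must send cookies or auth headers cross-origin.
CORS_ALLOW_CREDENTIALS = True
```

Browsers refuse to combine a wildcard `Access-Control-Allow-Origin` with credentials, which is why the explicit list matters as soon as `CORS_ALLOW_CREDENTIALS` is on.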


For a first serious API, keep the security baseline strict:

- Choose one auth flow deliberately. Mixing session auth, token auth, and JWT too early creates debugging noise.

- Write permission rules per resource. "Authenticated users only" is usually too broad for anything with user-owned data.

- Test denied paths. A 403 is just as important to verify as a 200.

- Separate secrets from code. Local convenience should not dictate production settings.

- Review defaults in every environment. Debug flags, permissive CORS settings, and broad allowed hosts often survive longer than expected.

The goal is not maximum complexity. The goal is a security model another engineer can read, test, and trust.

The Professional Workflow: Testing, CI, and Deployment #

A surprising number of Django REST API tutorials teach the least convincing part of backend work: getting a 200 OK on localhost and calling it done.

Professional API work starts after the first successful response. The difference shows up in the repository. Can another developer run the project without Slack messages? Do tests catch a broken serializer before it reaches production? Does deployment depend on one person's laptop history, or on documented, repeatable steps?

Those questions matter in hiring and in real teams. Employers rarely care that you can follow a CRUD walkthrough. They care that you can ship code that survives change.

Testing changes the shape of your code #

Tests are not just a safety net. They are a design tool.

I usually tell junior developers to watch for friction while writing tests. If an endpoint is painful to test, the code is often telling you something useful. The view may be doing validation, orchestration, and side effects all at once. Business rules may be buried inside serializer methods. The setup may depend on global state, implicit fixtures, or hard-coded settings.

That is why strong API tests focus on behavior and boundaries, not just line coverage:

- Request and response contracts. Status codes, payload structure, validation errors, and edge cases.

- Authorization rules. Which users can read, create, update, or delete a resource.

- Domain behavior. State transitions, duplicate submissions, and side effects such as emails or audit records.

A good suite makes refactoring cheaper. Without tests, every cleanup becomes a bet against regression.

If you want to strengthen the habit behind this work, this guide to writing unit tests in Python complements API-specific testing well.

CI proves the project works outside your machine #

Local tests are useful. Automated tests on every push are much more persuasive.

GitHub Actions, GitLab CI, or any similar tool solves the same professional problem. The repository should answer a simple question by itself: did the change break the API contract? That check should happen before anyone reviews a pull request or deploys a branch.

For a portfolio project, the pipeline does not need to be elaborate. A clean setup usually runs formatting checks, executes the test suite, and fails fast when dependencies or settings drift. That alone shows good engineering habits because it treats software as a repeatable system instead of a personal setup.

A lightweight workflow usually includes:

- Small, readable commits. They make bugs easier to isolate and code review easier to follow.

- Automated test runs on push and pull request. Broken code gets caught early.

- Separate settings for local, test, and production. Environment mistakes are one of the easiest ways to ship avoidable bugs.

- Pinned dependencies. Reproducible installs matter when someone else clones the repo six months later.

Deployment readiness is part of the design #

Deployment is not an ops afterthought. It exposes whether the project was structured clearly from the beginning.

A backend project becomes easier to trust when setup is explicit: environment variables are documented, migrations run cleanly, startup commands are predictable, and the app behaves the same way in development and production. Docker can help here, not because every small API needs containers, but because containers force you to define the runtime instead of relying on tribal knowledge.
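One way to make environment configuration explicit is to read every setting through a single function that fails loudly when something required is missing. The variable names (`DJANGO_SECRET_KEY`, `DJANGO_DEBUG`, `DJANGO_ALLOWED_HOSTS`) and the `load_settings` helper are assumptions for this sketch, not a Django convention.

```python
import os
from typing import Mapping


def env_bool(environ: Mapping[str, str], name: str, default: bool = False) -> bool:
    """Interpret common truthy strings; anything else counts as False."""
    value = environ.get(name)
    if value is None:
        return default
    return value.strip().lower() in {"1", "true", "yes", "on"}


def env_list(environ: Mapping[str, str], name: str) -> list:
    """Split a comma-separated variable into a clean list."""
    raw = environ.get(name, "")
    return [item.strip() for item in raw.split(",") if item.strip()]


def load_settings(environ: Mapping[str, str] = os.environ) -> dict:
    return {
        # Required: raises KeyError at startup instead of failing later.
        "SECRET_KEY": environ["DJANGO_SECRET_KEY"],
        # Safe default: debug stays off unless explicitly enabled.
        "DEBUG": env_bool(environ, "DJANGO_DEBUG", default=False),
        "ALLOWED_HOSTS": env_list(environ, "DJANGO_ALLOWED_HOSTS"),
    }


# Example with an explicit mapping, as a test or a README might show it.
example = load_settings({
    "DJANGO_SECRET_KEY": "change-me",
    "DJANGO_ALLOWED_HOSTS": "api.example.com, example.com",
})
```

Passing the environment in as a mapping keeps the function testable and doubles as documentation of which variables the project expects.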

There is also a trade-off worth stating clearly. More infrastructure does not automatically make a project look senior. A simple deployment with clear environment separation, documented commands, and a reproducible build is stronger than a flashy cloud setup nobody can explain.

A portfolio-ready API repository should make these signals obvious:

| Signal | What it tells employers |
|---|---|
| Tests pass in CI | You understand verification |
| README includes setup and API notes | You communicate with teammates |
| Environment config is separated | You understand deployability |
| Git history is clean | You work incrementally, not chaotically |

Some developers prefer assembling this workflow from documentation, open-source examples, and repeated trial and error. Others want a more structured practice environment. Codeling offers browser-based exercises and synchronized local projects around Python API development, which can help if your goal is to build portfolio work with more repetition and less passive watching.

A backend project is credible when another developer can pull it, run it, verify it, and change it with confidence. That is the standard worth building toward.

Polishing Your API for Production and Portfolios #

A CRUD demo proves you can follow instructions. A portfolio API that stands up to review proves you can make engineering decisions.

The difference usually shows up in the parts beginners postpone: query behavior, traffic shaping, and documentation that another developer can use without reading your code first. These are not cosmetic improvements. They show whether you understand how an API behaves after the tutorial ends.

Performance work starts with query discipline #

The fastest way to make a Django REST API look junior is to ignore query count. A list endpoint can appear correct in local testing while performing one query for the base queryset and another for each related object. That pattern is common enough that Sentry calls out N+1 queries as a frequent performance issue in database-backed applications and recommends eager loading related data where appropriate (Sentry on the N+1 problem).

I tell junior developers to start performance review at the serializer boundary. DRF makes it easy to expose related data. It also makes it easy to hide expensive access patterns behind clean-looking Python code. If a serializer touches author.profile, comments, and tags, the view queryset has to be designed for that representation. Otherwise the endpoint works correctly and still scales poorly.

A few habits help:

- Inspect list endpoints before detail endpoints. They multiply bad query patterns faster.

- Use select_related for single-value joins and prefetch_related for collections. They solve different problems.

- Check query counts in tests or Django Debug Toolbar during development. Performance should be inspected, not guessed.

- Keep serializer fields honest. Every nested field has a database cost unless you shape the queryset around it.

Reviewers notice this because query discipline reflects architectural discipline. It shows you can connect the API contract to the data access plan.

Pagination and throttling show production judgment #

Unbounded list endpoints are a design mistake, not a missing enhancement. Pagination protects response time, memory use, and database load. It also gives clients a stable way to consume data without reverse-engineering your defaults.

Throttling solves a different class of problem. It limits scraping, accidental traffic spikes, and noisy clients before they turn into an operations issue. Django REST Framework includes throttling classes for exactly this reason, and the official docs show how to apply AnonRateThrottle and UserRateThrottle at the global or view level (DRF throttling documentation).

The trade-off matters. Aggressive throttling can frustrate legitimate consumers. Loose throttling can leave the API exposed to avoidable abuse. A good portfolio project makes the policy explicit and documents it. Even simple choices such as page size limits and anonymous request caps signal that you are designing for real use, not only for a screenshot.
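An explicit policy can be as small as a settings fragment. The sketch below uses DRF's built-in pagination and throttle classes; the page size and rate values are placeholders to adapt and document, not recommendations.

```python
# settings.py fragment (illustrative values)
REST_FRAMEWORK = {
    # Bound every list endpoint by default.
    "DEFAULT_PAGINATION_CLASS": "rest_framework.pagination.PageNumberPagination",
    "PAGE_SIZE": 20,
    # Separate limits for anonymous and authenticated callers.
    "DEFAULT_THROTTLE_CLASSES": [
        "rest_framework.throttling.AnonRateThrottle",
        "rest_framework.throttling.UserRateThrottle",
    ],
    "DEFAULT_THROTTLE_RATES": {
        "anon": "60/hour",
        "user": "1000/day",
    },
}
```

Whatever values you pick, repeat them in the README so clients do not have to discover the limits by hitting them.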

Documentation is part of the product #

API documentation is operational tooling. If another developer cannot discover how authentication works, what a validation error looks like, or which fields are writable, the API is unfinished.

Good docs also force better design. I have rewritten endpoints after trying to document them because the naming was vague or the request shape was doing too much. That is a useful pressure test. If an endpoint takes three paragraphs to explain, the interface probably needs work.

A portfolio-ready API usually includes:

- Clear endpoint descriptions. State what the route does and which roles can access it.

- Authentication instructions. Show how to obtain credentials and send them correctly.

- Request and response examples. Make common flows easy to test from curl, Postman, or the browsable API.

- Operational constraints. Document pagination defaults, throttle limits, validation rules, and important edge cases.

As noted earlier, some developers use structured practice platforms to build these habits through repeated implementation instead of passive watching. That can help, but the signal employers care about is simpler. Can they clone the project, understand the API surface, and trust the choices behind it?

That final polish is what turns a tutorial exercise into a credible backend sample.